Description

I wrote this master’s thesis at the Hochschule der Medien Stuttgart while employed as a working student at Fraunhofer IPA. It was supervised by Prof. Dr. Bernhard Eberhardt and Dr.-Ing. Ira Effenberger, with kind support from M.Sc. Frederik Seiler.

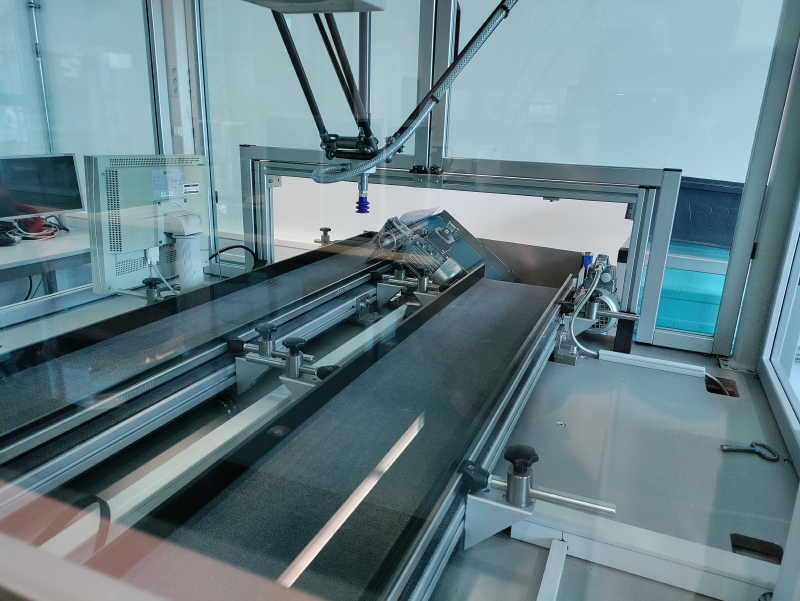

The thesis presents an AI-based approach for 6D object pose estimation in RGB images without manual annotation effort. The method uses cross-modal knowledge distillation, where a teacher model trained on synthetic depth maps transfers knowledge to a student model that operates on real RGB images.
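As a rough illustration of the teacher-to-student transfer, the sketch below treats a teacher pose estimated in the 3D sensor frame as a pseudo-label for the student in the camera frame. It is not the thesis code: the function names, the extrinsic `T_cam_from_sensor`, and the loss (translation distance plus weighted geodesic rotation angle) are assumptions chosen for illustration only.

```python
import numpy as np


def make_pose(R, t):
    """Pack a 3x3 rotation and a 3-vector translation into a 4x4 transform."""
    T = np.eye(4)
    T[:3, :3] = R
    T[:3, 3] = t
    return T


def rot_z(angle_rad):
    """Rotation about the z-axis, used here only to build example poses."""
    c, s = np.cos(angle_rad), np.sin(angle_rad)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])


def teacher_pose_in_camera_frame(T_cam_from_sensor, T_sensor_from_obj):
    """Move the teacher's pose from the 3D sensor frame into the camera frame.

    T_cam_from_sensor is assumed to come from the camera/3D sensor calibration.
    """
    return T_cam_from_sensor @ T_sensor_from_obj


def rotation_error_deg(R_a, R_b):
    """Geodesic angle in degrees between two rotation matrices."""
    cos_angle = (np.trace(R_a.T @ R_b) - 1.0) / 2.0
    return np.degrees(np.arccos(np.clip(cos_angle, -1.0, 1.0)))


def distillation_loss(T_student, T_pseudo, rot_weight=1.0):
    """Hypothetical loss: translation distance plus weighted rotation angle (rad)."""
    trans_err = np.linalg.norm(T_student[:3, 3] - T_pseudo[:3, 3])
    rot_err = np.radians(rotation_error_deg(T_student[:3, :3], T_pseudo[:3, :3]))
    return trans_err + rot_weight * rot_err


# Example: a teacher prediction in the sensor frame becomes a camera-frame pseudo-label.
T_cam_from_sensor = make_pose(rot_z(np.pi / 2), [0.1, 0.0, 0.0])
T_sensor_from_obj = make_pose(rot_z(np.pi / 6), [0.0, 0.5, 1.0])
T_pseudo = teacher_pose_in_camera_frame(T_cam_from_sensor, T_sensor_from_obj)

# A student prediction that is slightly off in rotation and translation.
T_student = make_pose(rot_z(2 * np.pi / 3 + 0.05), [-0.38, 0.0, 1.0])
print(distillation_loss(T_student, T_pseudo))
```

In an actual training loop, the student prediction would come from the RGB network and the loss would be written with differentiable tensor operations, but the frame bookkeeping stays the same.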

Key innovations include:

- Annotation-free training: Eliminates manual 3D pose labeling in 2D images through synthetic data generation

- Cross-modal knowledge transfer: A teacher model trained on synthetic depth maps distills its knowledge to a student model used for RGB inference

- Practical applicability: Enables pose estimation when 3D sensors are not feasible due to cost, cycle rate, or installation constraints

- Automated pipeline: Requires only camera and 3D sensor calibration, with comprehensive evaluation of rotation representations and augmentation techniques
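To make the point about rotation representations more concrete, here is a small sketch of two common ways to turn raw network outputs into a valid rotation matrix: a unit quaternion and the continuous 6D representation (Gram-Schmidt orthogonalization). Which representations the thesis actually compares is not stated here, so both choices and the function names are assumptions.

```python
import numpy as np


def rotation_from_quaternion(q):
    """Convert a quaternion (w, x, y, z) to a rotation matrix; q is normalized first."""
    w, x, y, z = np.asarray(q, dtype=float) / np.linalg.norm(q)
    return np.array([
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ])


def rotation_from_6d(v):
    """Map six unconstrained numbers to a rotation matrix via Gram-Schmidt."""
    v = np.asarray(v, dtype=float)
    a1, a2 = v[:3], v[3:]
    b1 = a1 / np.linalg.norm(a1)
    a2_orth = a2 - np.dot(b1, a2) * b1   # remove the component along b1
    b2 = a2_orth / np.linalg.norm(a2_orth)
    b3 = np.cross(b1, b2)                # completes a right-handed basis
    return np.stack([b1, b2, b3], axis=1)


# Any non-degenerate 6-vector yields an orthonormal matrix with determinant +1.
R_6d = rotation_from_6d([1.0, 0.2, 0.0, 0.1, 1.0, 0.3])

# A 90 degree rotation about z expressed as a quaternion.
R_quat = rotation_from_quaternion([np.sqrt(0.5), 0.0, 0.0, np.sqrt(0.5)])
```

Both functions always return a proper rotation, so a regression head can output unconstrained values and still produce a usable pose.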

The approach achieves high-accuracy pose estimation on real RGB images, which makes it a promising way to reduce manual annotation effort in robotics applications.

Technologies

- EfficientDet: The backbone object detection model.

- PyTorch: Implementation of pose estimation head networks.

- NumPy: Used for data manipulation and numerical operations.

- Ray Tune: Hyperparameter optimization and training management.

- PyPylon: The Python wrapper for the Basler pylon camera SDK, used for capturing images.

- iCon API: Used for accessing the 3D sensor data.

- Blender: Scripted to generate synthetic depth maps and images.

- OpenCV: Mainly used for sensor calibration and image preprocessing.

- Open3D: Used for visualization and sensor calibration.

- Jupyter Notebook: Evaluation and visualization of results.

Links

Confidential

Thesis and repository are confidential until 2025-12-06. I will update this page after the embargo period and include further details about the thesis and the repository.